Designs for the touch-averse world

Last week we had a four-day remote hackathon at my place of work, Digital Catapult in London. I ended up winning four colleagues over to work on how to sketch designs for touch-free interfaces. The topic had emerged from analysing post-pandemic scenarios for, e.g., location-based immersive entertainment, and from reports coming out of manufacturing industries about “air buttons” and similar controls.

Furthermore, there are signs that behaviour around touchscreens is changing. According to a UK study by the design agency Foolproof:

“We found that 74% of people have either worn gloves to use a public touch surface, or wiped down a public touchpoint. We also found that 80% of people believe they will behave differently when interacting with public technology that they have to touch.”

The dawn of touch-free in public spaces

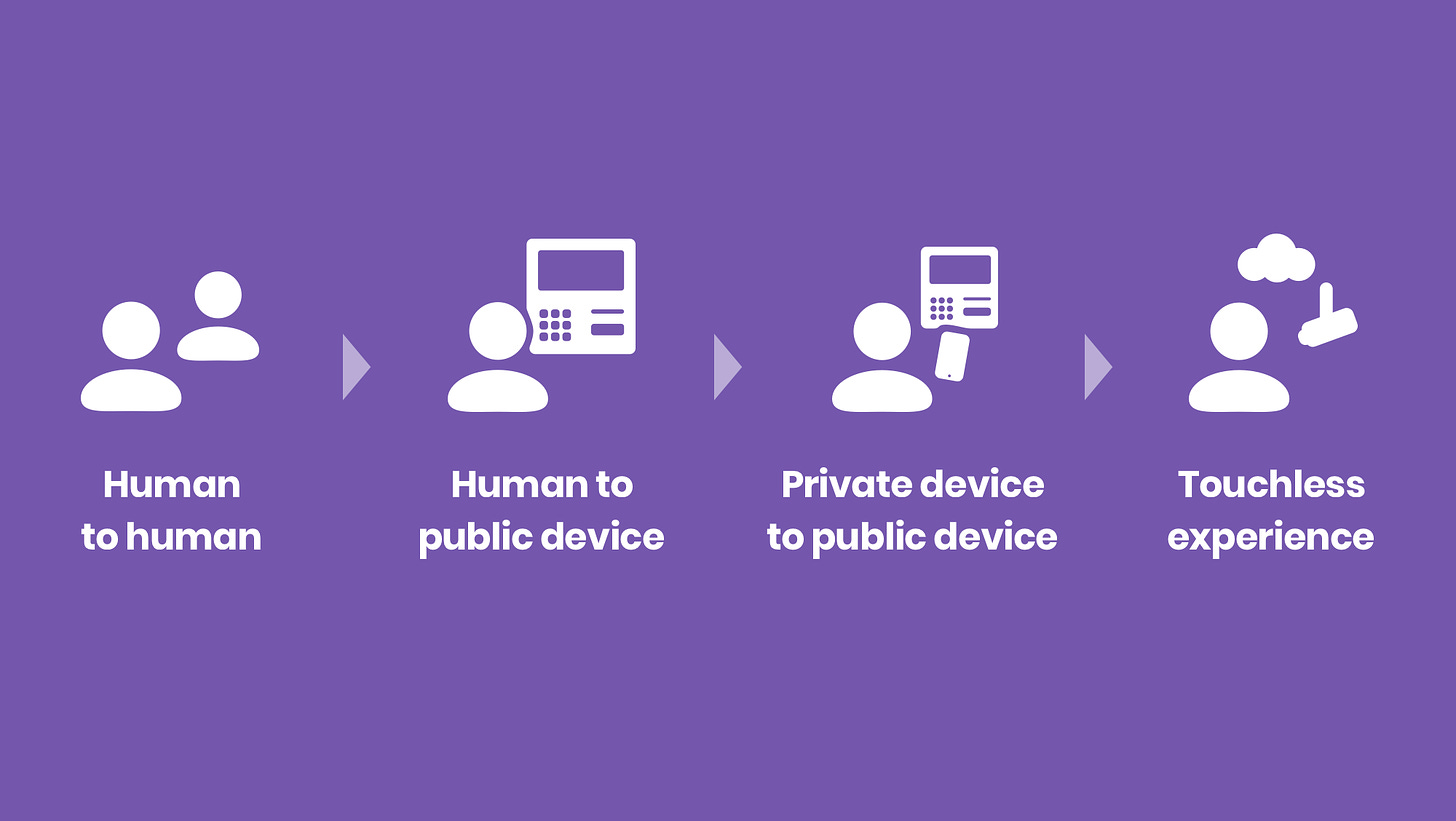

Using touchscreens will be a less attractive proposition for many user experiences in a post-pandemic world. We will have to adopt other technologies for interacting with products, services, and fellow humans. Foolproof illustrates the potential trajectory towards less touch in the image above.

The ‘private device to public device’ step in the image above suggests that the short-term solution is to conduct even more of our interactions on smartphones. They are personal devices that we do not mind touching. From accepting payments to withdrawing cash from ATMs to scanning QR codes in public spaces, personal smartphones let us act at a distance, without touching something other people have touched.

However, it is in the interest of big tech (Apple, Google, Facebook, etc.) to move towards next-generation computing platforms to secure market share and keep consumers locked into their ecosystems. Of the potential next-generation everyday computing technologies, spatial computing, or “XR”, has some of the touch-free enabling tech built in. Gesture detection, voice input, and eye-tracking will mature as basic functionalities within the next 2-3 years.

Therefore, I hypothesise that the question of touch-free will be solved more comprehensively once the smartphone era starts winding down in five years or so.

Meanwhile, let’s think about the design premises for a touch-free, or let’s say ‘touch-moderate’, future.

“Touch-free interfaces” - what are the options?

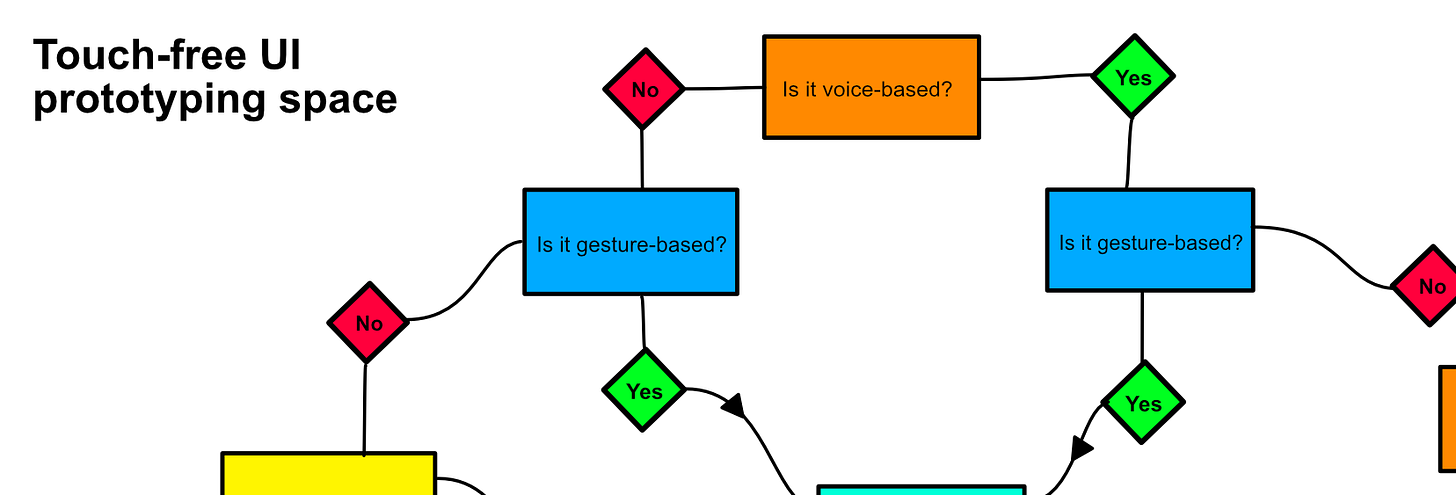

On the first day of our hackathon, we were asking that question too. If touch is a no-no, what does that leave? Voice, gestures, gaze, iris or facial recognition. All of these come with trade-offs, for example in terms of accessibility and inclusion. The flowchart above gives a glimpse of how we went about thinking through the issue.

Use cases in public spaces, both functional and recreational, from identification at ticket kiosks to visiting the gym, are likely to benefit from multi-sensory solutions. In turn, they will accelerate multi-sensory technologies that might otherwise have remained on the fringes while sight, sound, and touch dominated.

Leverage XR for prototyping

By the morning of the second day, we had more clarity. We decided to focus on ideas around self-service interfaces in public spaces. We refined a handful of ideas through sketching and proceeded to prototype them using XR tools.

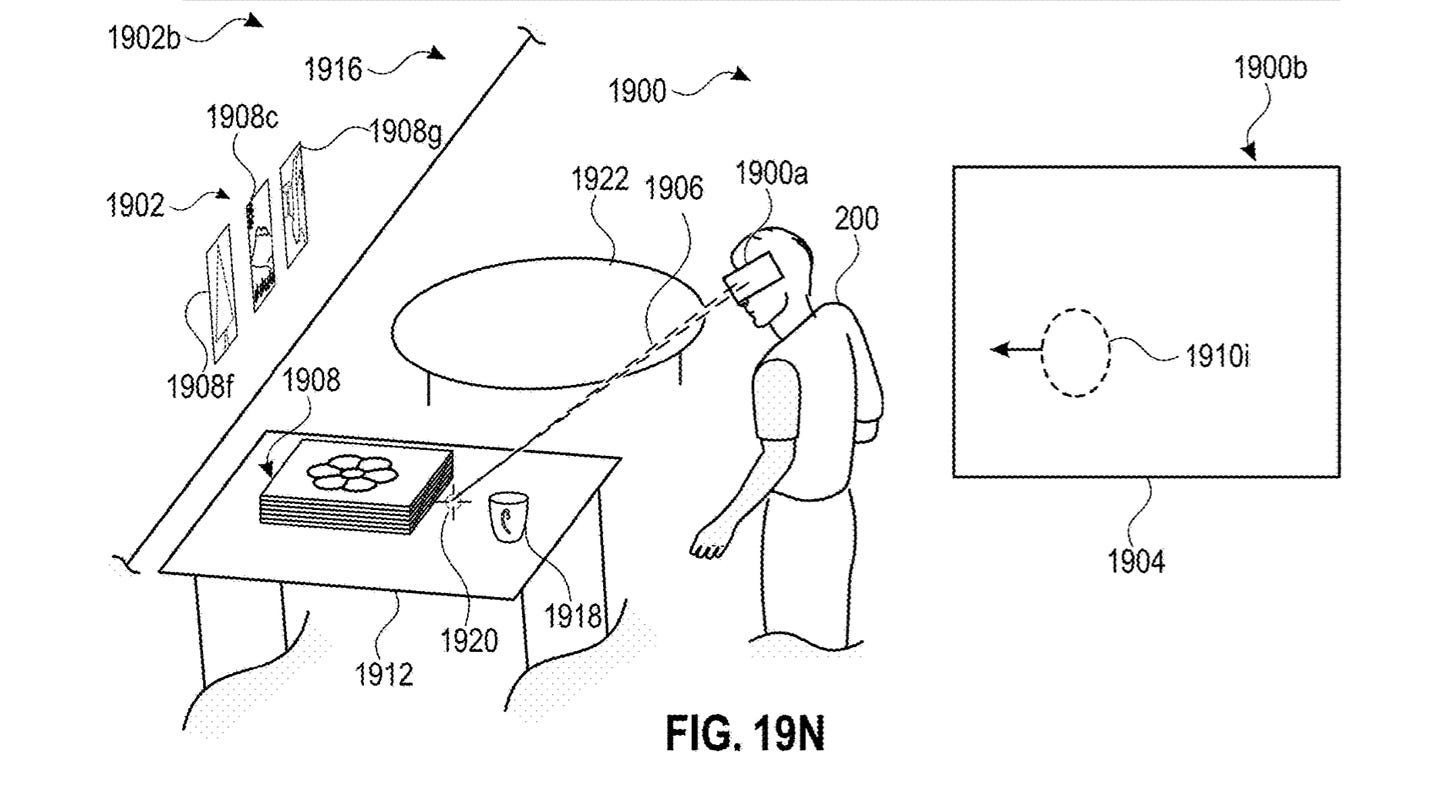

VR and MR are useful for prototyping both gestural and voice-based interactions because headsets like Microsoft HoloLens and Oculus Quest track hands. With them, one can build embodied experiences of, e.g., touch-free interactions that take place in mid-air. Current AR is less suited, because the only readily available AR devices are smartphones, which cannot accommodate mid-air 3D interactions.

New design domain, new false positives

However, even tools like the Quest and HoloLens have shortcomings, which may or may not point to longer-term UX design challenges. One is hand occlusion, which became evident very early on in the interaction I began prototyping (see the header image). Pointing with one hand close to the other hand’s open palm made the palm disappear from tracking, so I lost an important (touch-free but proprioceptive) reference point for carrying out the interaction.

Surfaces provide haptic feedback. The thing is that we interaction designers in the touch-concerned world cannot rely on surfaces to the same extent as before. Without that physical confirmation that an input was intended, we will have to deal with a whole new complexity of false positives. Let’s take gaze-based interactions as an example. On average, people blink 15-20 times a minute. What does that mean for the feasibility of interactions based on eye-tracking?
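To make the blink question concrete: at 15-20 blinks a minute, a blink arrives on average every 3-4 seconds (60/20 to 60/15), so any gaze interaction lasting a few seconds will almost certainly be interrupted. Below is a minimal sketch of one common technique, dwell-time selection, in which looking at an element for long enough counts as “pressing” it. It assumes a hypothetical tracker that reports, at fixed intervals, which element is being looked at, or `None` when it loses the eyes. `GazeSample`, `dwell_selections`, and both thresholds are hypothetical illustrations, not something we built at the hackathon or any vendor’s API.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple


@dataclass
class GazeSample:
    t_ms: int              # timestamp in milliseconds
    target: Optional[str]  # id of the element being looked at,
                           # or None when the tracker lost the eyes (e.g. a blink)


def dwell_selections(
    samples: Sequence[GazeSample],
    dwell_ms: int = 800,      # hypothetical: how long gaze must rest on a target to "press" it
    blink_gap_ms: int = 300,  # hypothetical: longest dropout (since last valid sample) treated as a blink
) -> List[Tuple[int, str]]:
    """Return (time, target) pairs where gaze dwelled on one target for dwell_ms.

    Missing samples are bridged while the time since the last valid sample stays
    within blink_gap_ms, so a blink does not restart the dwell timer. A longer
    dropout, or looking at a different target, restarts it.
    """
    selections: List[Tuple[int, str]] = []
    current: Optional[str] = None  # target currently being dwelled on
    start = 0                      # when the dwell on `current` began
    last_seen = 0                  # last time `current` was actually tracked
    fired = False                  # whether this dwell already produced a selection

    for s in samples:
        if s.target is None:
            # Tracking dropout: abandon the dwell only if it outlasts a blink.
            if current is not None and s.t_ms - last_seen > blink_gap_ms:
                current = None
            continue
        if s.target != current:
            # Gaze landed on a new target (or returned after a long dropout): restart.
            current, start, fired = s.target, s.t_ms, False
        last_seen = s.t_ms
        if not fired and s.t_ms - start >= dwell_ms:
            selections.append((s.t_ms, s.target))
            fired = True  # one selection per continuous dwell, not one per sample
    return selections


if __name__ == "__main__":
    # 100 ms samples of someone looking at "buy", with a blink at 400-500 ms.
    trace = [GazeSample(t, None if t in (400, 500) else "buy") for t in range(0, 1000, 100)]
    print(dwell_selections(trace))                  # blink bridged: one selection at 800 ms
    print(dwell_selections(trace, blink_gap_ms=0))  # no blink tolerance: the dwell never completes
```

The trade-off is the design problem in miniature. A generous `blink_gap_ms` forgives blinks but also keeps a dwell alive when someone genuinely glances away. A strict one makes every blink cancel the interaction. Neither a surface nor a click is there to settle which was intended.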

Touch-free does not necessitate wearables, but it will benefit from them

You might think that this touch-free world will have to wait until AR glasses go mainstream. However, various sensor technologies are already widespread. Contactless payments and barcode scanning in retail stores are examples of everyday applications that will get a boost now that touch-based interfaces have suffered a setback.

Companies like UltraLeap, with their mid-air haptics, are in a position to gain more traction than they could have asked for. Rolling out solutions in public spaces will take time, though.

Next steps: Designing touch-free, multi-sensory user journeys

In the coming weeks, my colleagues and I will refine these reflections into formats that help businesses identify solutions for transforming their customer journeys into touch-free ones. Look out for more on the topic.

The touch-free future is not ready. Let’s start making it.

Stay safe,

Aki