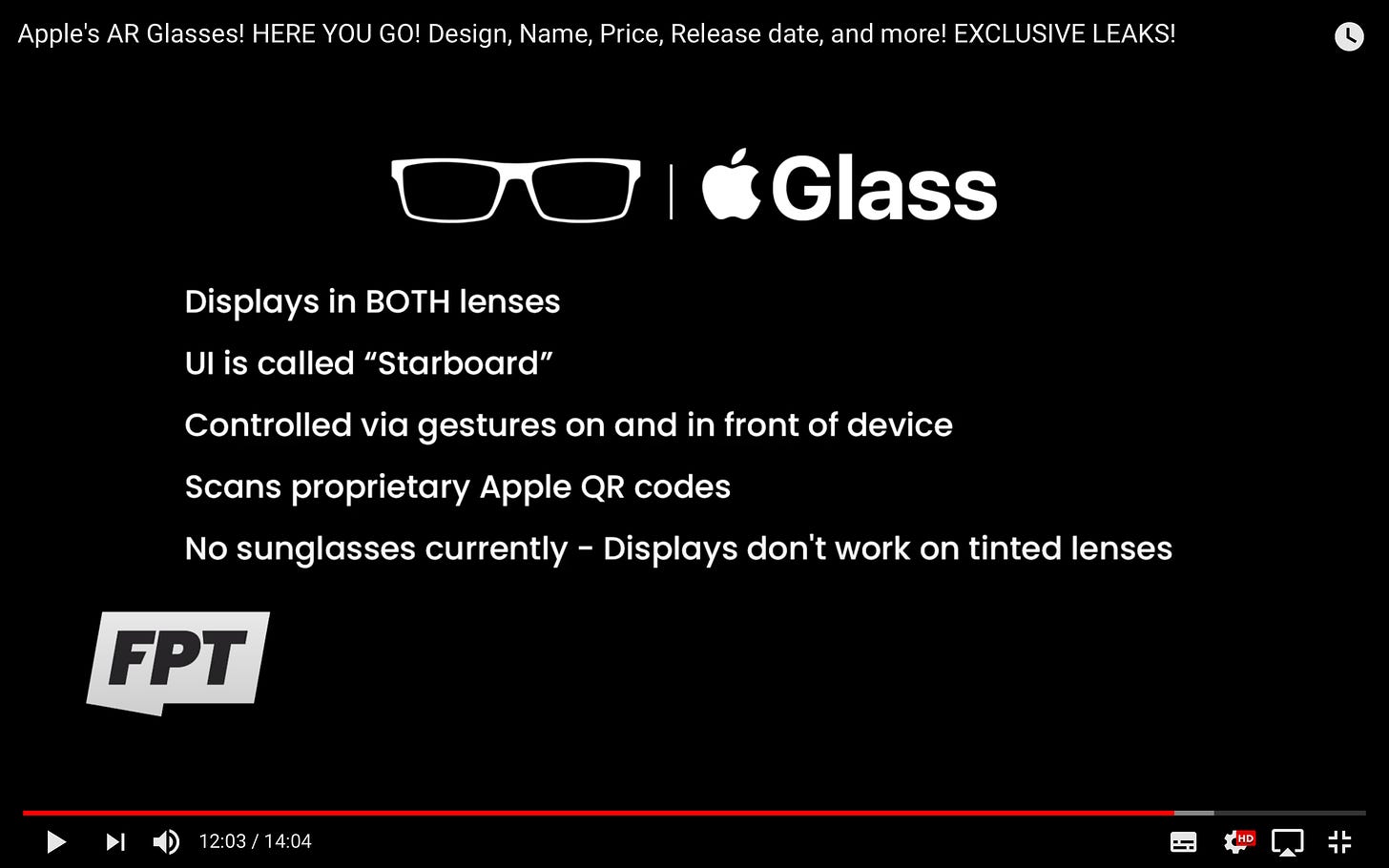

Image by Jon Prosser at Front Page Tech.

In part 1, I summarised some of the enabling tech and how making AR glasses is a series of trade-offs, and essentially pretty damn hard.

The posts I referred to in part 1 have a deliberate tech focus. The authors do not focus on how we would use AR glasses beyond vision. As far as I can tell, there is only one mention of an interaction functionality: tapping the frame on the right temple. Use cases are referred to in passing but primarily in terms of technical specifications rather than what designers could do with them.

For example, field of view (FOV) is discussed in absolute degrees, not in terms of the design constraints that the (limited) degrees introduce when crafting user experiences.

This is the motivation for this second part of my dive into the Apple Glass speculation. We might again find value in looking at how Apple Watch content and use cases have evolved, but once we reach beyond “data snacking” use cases, the analogy starts to fall apart. While displaying just-in-time information has its definite uses, AR glasses app developers should be thinking beyond it to truly leverage the potential added value of augmented reality.

Smart glasses vs AR glasses: From data snacking to spatial computing

Chris Davies of SlashGear used the first-generation Focals by North glasses for a year and reported on his experience. His use centred on data snacking, driven by gaze or voice input or by a ring-like controller.

“Focals have given me addicting glimpses of how that future will work. Having a notification float into view, and responding to it with a quick voice command, can be much quicker than pulling out my phone and doing it the traditional way.”

However, the important distinction here is that Focals 1.0 is not an AR device in the true meaning of the word: it does not display 3D content anchored in the world. Therefore, extrapolating from Davies’ experience to what Apple Glass might be is relevant only in the context of device qualities (comfort, battery life, etc.) and how information can be displayed in 2D form. North does not have an existing AR software development stack like Apple does (Google does, with ARCore, and recently acquired North, effectively postponing what was supposed to be their 2.0 device).

When we move into the realm of spatial computing and true AR, many more challenges come into play. First of all, having a display in both eyes is not a given. As Karl Guttag notes: “The combination of dual displays and large eye boxes results in much more than 2X the power consumption, which in turn requires a bigger battery and heat management”. A single-eye display also sidesteps the need to support different IPDs (inter-pupillary distances).

But displays in both lenses are required for AR glasses to enable stereoscopic vision. Only then can 3D objects be displayed so that they appear to reside in space (ideally with IPD adjustment, either mechanical or electronic). Given Apple’s investment in ARKit as a development framework and Apple’s design philosophy, a constrained, single-eye user experience would not sit well, even in the first product iteration. So let’s assume both eyes for Apple Glass, as Prosser also says.
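As a rough illustration of why stereo rendering has to know the IPD (my own simplified geometry, not from Guttag’s or Prosser’s material, assuming an object straight ahead and a typical adult IPD of about 63 mm), the vergence angle between the two eyes’ lines of sight to an object at distance d is:

$$
\theta_v = 2\arctan\left(\frac{\mathrm{IPD}}{2d}\right)
$$

$$
\begin{aligned}
d = 0.5\,\text{m}:&\quad \theta_v = 2\arctan(0.063) \approx 7.2^\circ\\
d = 1\,\text{m}:&\quad \theta_v = 2\arctan(0.0315) \approx 3.6^\circ\\
d = 2\,\text{m}:&\quad \theta_v = 2\arctan(0.01575) \approx 1.8^\circ
\end{aligned}
$$

The two per-eye images have to reproduce these small angular differences for a virtual object to appear anchored at the intended depth, which is why a mismatch between the rendered and the actual IPD shows up as errors in perceived depth and scale.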

Design constraint #1: Field of view

A few years ago, when I first tried Microsoft HoloLens, the FOV (30 degrees horizontally in the first version) did not affect the experience until I engaged with larger 3D models. Initially, just playing around and placing virtual dogs and flowers in my living room seemed somewhat magical.

When I brought up the human anatomy models in the onboarding inventory, the experience immediately felt like looking into the augmented world through a letterbox. At the same time, the value-add of seeing the model in 3D and at life-size scale was instant and intuitive. I remember thinking that this is how these kinds of objects should be introduced in educational and similar contexts.

Karl Guttag makes an educated guess that the Apple Glass field of view will be between 35 and 50 degrees horizontally. To put this in context, our binocular FOV, where the brain combines both eyes, is around 120 degrees; including the monocular areas, it extends up to 195 degrees. Apple Glass FOV will be seriously constrained, perhaps only slightly better than HoloLens and Magic Leap.

Image credit: Oliver Kreylos
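To make the degrees more tangible, here is a worked example (my own arithmetic, assuming content viewed head-on at 2 m). The width w covered by a horizontal FOV θ at distance d is:

$$
w = 2d\tan\left(\frac{\theta}{2}\right)
$$

$$
\begin{aligned}
\theta = 35^\circ:&\quad w = 2 \cdot 2\,\text{m} \cdot \tan(17.5^\circ) \approx 1.26\,\text{m}\\
\theta = 50^\circ:&\quad w = 2 \cdot 2\,\text{m} \cdot \tan(25^\circ) \approx 1.87\,\text{m}\\
\theta = 120^\circ:&\quad w = 2 \cdot 2\,\text{m} \cdot \tan(60^\circ) \approx 6.93\,\text{m}
\end{aligned}
$$

In other words, a 2 m wide object placed 2 m away would overflow even the 50-degree frame, while it would sit comfortably within our natural binocular field.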

Interaction designers will need to learn to work with this constraint. Microsoft calls it the “holographic frame”. In their design guidelines, they write that designers might

“feel the need to limit the scope of their experience to what the user can immediately see, sacrificing real-world scale to ensure the user sees an object in its entirety.” - Holographic frame - Mixed Reality | Microsoft Docs

However, rather than overloading the frame and scene with content, such as multiple small objects that fit the frame, designers should encourage users to move around, as this is the whole point of spatial computing from a UX point of view. That said, chunking content and information remains a tried and tested UX principle for AR as well, i.e. letting users “adjust to the experience”, as Microsoft’s HoloLens documentation says.

For example, for better UX:

Designers should first introduce a large-scale object at a scale that fits the frame, and then let the user initiate scaling to full size.

Movement towards objects can be encouraged with both visual indicators and spatial sound.

If there are many objects in the scene, designers should think about how they let users understand the layout of the content and anchor it persistently to the real world before asking them to interact in more complex ways.
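The first guideline can be sketched in a few lines: compute how wide the frame is at the placement distance, w = 2·d·tan(FOV/2), and if the object is wider, start it at a reduced scale. This is a minimal sketch, assuming a head-on view, a horizontal-only check and a hypothetical 90% margin; the function names are my own and not part of any AR framework.

```python
import math


def visible_width(distance_m: float, fov_deg: float) -> float:
    """Width (metres) covered by a horizontal FOV of fov_deg at distance_m, viewed head-on."""
    return 2.0 * distance_m * math.tan(math.radians(fov_deg) / 2.0)


def fit_scale(object_width_m: float, distance_m: float, fov_deg: float,
              margin: float = 0.9) -> float:
    """Uniform scale factor that lets an object fit inside the holographic frame.

    Returns 1.0 (real-world scale) if the object already fits; otherwise shrinks it
    so that it spans `margin` of the visible width. Simplification: the object is
    treated as flat at distance_m, and only the horizontal FOV is checked.
    """
    if object_width_m <= 0 or distance_m <= 0 or not 0 < fov_deg < 180:
        raise ValueError("width and distance must be positive; FOV must be in (0, 180)")
    if not 0 < margin <= 1:
        raise ValueError("margin must be in (0, 1]")
    available = margin * visible_width(distance_m, fov_deg)
    return min(1.0, available / object_width_m)


# Usage: a hypothetical 3 m wide model placed 2 m away, with an assumed 40-degree FOV.
preview = fit_scale(3.0, 2.0, 40.0)
print(round(preview, 2))  # 0.44
print(fit_scale(0.5, 2.0, 40.0))  # 1.0: a small object is shown at real-world scale
```

The user-initiated step to full size would then simply animate the scale from the preview value back to 1.0, at which point the user has to move or turn their head to take in the whole object.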

Design constraint #2: The gaze and display quality

The above are general AR UX design principles that already apply to smartphone AR but become more important with the limited FOV of eyeglasses. Our window to the augmented world is no longer an extension of our arm, but an extension of our gaze.

Microsoft, in their Mixed Reality guidelines for the HoloLens:

“The holographic frame presents a tool for the developer to trigger interactions as well as evaluate where a user's attention dwells”

Before discussing the interaction design potential, let’s summarise what we can expect from the quality of the images themselves. Karl Guttag assumes that “Apple Glass appears to be using a (hologram) diffractive waveguide technology.” This technology would provide better image quality than HoloLens and Magic Leap. Guttag’s earlier (2018) comparison of a diffractive waveguide solution to HoloLens and Magic Leap is telling:

Image by Karl Guttag: Magic Leap, HoloLens, and Lumus Resolution “Shootout” (ML1 review part 3) – Karl Guttag on Technology

Better image quality contributes to a more satisfying UX and strengthens the case for all-day wearability. Achieving that is the game-changer in terms of secondary revenue from apps and services, so it is no wonder Apple would be willing to concede hardware margins for better UX.

Besides visual granularity, the question of gaze relates to interaction with objects beyond vision. If the camera tracks the gaze, it will eventually track your hands too: not necessarily in the first device generation (even if Prosser claims so), but perhaps by the second or third. This opens up the potential for gestural interactions beyond physically touching the glasses’ frame, as you do today with AirPods.
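Microsoft’s point that the holographic frame lets developers “evaluate where a user's attention dwells” suggests one common gaze pattern: dwell-based selection, where resting your gaze on a target for a moment selects it. The class below is a hypothetical sketch, not an Apple or Microsoft API; the 0.8-second threshold is an assumption that would need tuning per experience.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DwellSelector:
    """Hypothetical gaze-dwell selector (not an Apple or Microsoft API).

    A target is "selected" once the user's gaze has rested on it for
    `dwell_s` seconds without interruption.
    """

    dwell_s: float = 0.8  # assumed threshold; would need tuning per experience
    _target: Optional[str] = field(default=None, init=False)
    _since: float = field(default=0.0, init=False)
    _fired: bool = field(default=False, init=False)

    def update(self, target: Optional[str], t: float) -> Optional[str]:
        """Feed the id of the gazed-at target (None if nothing) at time t, in seconds.

        Returns the target id on the update where the dwell completes, otherwise None.
        """
        if target != self._target:
            # Gaze moved to a different target (or away from all targets): restart the timer.
            self._target, self._since, self._fired = target, t, False
            return None
        if target is not None and not self._fired and t - self._since >= self.dwell_s:
            self._fired = True  # fire only once per continuous dwell
            return target
        return None


# Usage: gaze samples as (target id, timestamp in seconds).
samples = [("dog", 0.0), ("dog", 0.5), ("dog", 0.9), ("dog", 1.2), (None, 1.3), ("flower", 1.4)]
selector = DwellSelector(dwell_s=0.8)
events = [selector.update(target, t) for target, t in samples]
print(events)  # [None, None, 'dog', None, None, None]
```

Firing only once per continuous dwell avoids repeatedly triggering the same object while the user simply keeps looking at it, a real risk when the frame is small and content tends to sit near its centre.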

Design constraint #3: Camera or not, and to what purposes?

In his posts, Karl Guttag ponders whether Apple Glass will have a camera, prompted by Prosser’s claim that there would not be one, to mitigate privacy concerns. Guttag notes that having no camera shuts out certain revenue streams, and he ultimately falls on the side of “with a camera”.

However, privacy has become a way for Apple to differentiate itself from Facebook and Google. This does not necessarily rule out a camera: there could be one that users, or even developers, don’t have direct access to, much as VR headsets use cameras for positional tracking without giving access to the pass-through image. Thus, the camera in the Glass could be available for image recognition (e.g. QR codes), possibly feeding data to Siri, but not for user-activated functions such as taking photos.

Leaked pics from Apple's AR app Gobi - Constine's Moving Product newsletter

The LiDAR sensor introduced in the 2020 iPad Pro enables scanning environments for faster and more accurate scene understanding, which is the foundation for augmenting the physical world with AR objects. Apple Glass will benefit from the LiDAR data Apple has collected from iPad Pro use, which should give developers and designers a more robust starting point for creating satisfying user experiences that don’t suffer from as many teething problems as smartphone AR, provided that the first-generation model has the sensor.

With the LiDAR sensor in place, the Apple Glass would scan the environment, presumably only when the user allows it, as with current smartphone location services. Again, that comes at some cost to battery life.

Design constraint #4: New design patterns

We come to the question of display quality and how well the lenses can show virtual objects in different lighting conditions, against different backgrounds, etc. Guttag refers to North’s presentation about their Gen 2 glasses and how the examples point to data snacking within the confines of a limited resolution, similar to a smartwatch.

A new design pattern language likely needs to be developed for AR UX design. Apple will no doubt have their take on this with the “Starboard” UI, while Microsoft has been developing their own for their Mixed Reality solutions.

From a UX point of view, almost as important as what the glasses can show is that they are easy to turn off and/or elegantly “understand” when the user is not actively focusing on the information displayed. As Karl Guttag writes: “The most important thing for all-day wear is that when the glasses are “off,” they don’t cause vision problems.”

Design constraint #5: Interactions beyond the gaze

Any physical interaction with the glasses themselves to control application content can suffer from accessibility issues. Even the right-temple tap mentioned in Prosser’s video sounds like something Apple would want to avoid, unless both temples of the frame provide gestural interactions, similar to the AirPods.

Karl Guttag: “Putting the LiDAR on the right temple and would seem to be worse for left-handed people (about 10% of the population). Also, gesture input can be very problematic in real-world situations.”

I would push back: sure, gesture input without physically touching the device is a challenge due to the immaturity of hand tracking. But the AirPods and the Apple Watch have paved the way for simple double-taps that are becoming second nature, at least for the Apple audience using multiple devices (a phone plus a watch or AirPods).
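A double-tap on the frame is simple to recognise, which is part of its appeal for a first-generation device. Below is a hypothetical sketch (not an Apple API) in which two taps no more than an assumed 0.3 seconds apart count as one double-tap; feeding taps from either temple into the same detector would keep the gesture side-agnostic, addressing the left-handedness concern.

```python
from typing import List, Optional


class DoubleTapDetector:
    """Hypothetical double-tap detector for a touch-sensitive temple (not an Apple API).

    Two taps no more than `window_s` seconds apart count as one double-tap.
    """

    def __init__(self, window_s: float = 0.3) -> None:
        self.window_s = window_s  # assumed maximum gap between the two taps
        self._last_tap: Optional[float] = None

    def tap(self, t: float) -> bool:
        """Register a tap at time t (seconds); return True if it completes a double-tap."""
        if self._last_tap is not None and t - self._last_tap <= self.window_s:
            self._last_tap = None  # consume both taps so a third tap starts a new sequence
            return True
        self._last_tap = t  # first tap of a potential double-tap
        return False


# Usage: taps from either temple feed the same detector.
detector = DoubleTapDetector(window_s=0.3)
results: List[bool] = [detector.tap(t) for t in [0.0, 0.2, 1.0, 1.5, 1.7]]
print(results)  # [False, True, False, False, True]
```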

The first iteration of Apple Glass will not necessarily support hand tracking. Until it does, what Microsoft calls “instinctual interactions” will not come into play, and use cases will veer towards data snacking. These use cases will likely resemble how Watch and iPhone apps currently complement each other. Standalone glasses apps would need to take advantage of more advanced hardware features, such as hand tracking, to move beyond the smart glasses paradigm and leverage the full potential of AR. These hardware advances will probably not be in place before generation 2 or 3.

Design constraint #6: Voice input and output

The tech pieces I’ve referred to do not address audio capabilities at all. Given Apple’s history with music, it would be somewhat strange if audio did not play a significant part. Bose based their foray into AR on audio but has since shut down their AR operations. Yet the sound quality of their Frames was surprisingly good, thanks to the small open-ear speakers in the temples. To keep the price down, the Glass supposedly would not have speakers to begin with. Perhaps also to sell more AirPods?

Then again, Siri gets mentioned in the rumours, which is unsurprising given the breadth of potential for a voice interface on a wearable device. North’s Focals reportedly have a well-functioning voice user interface. Apple Glass will surely have a microphone; speakers may come once the reliance on the phone is over. Until then, designers should focus on use cases that leverage voice input and acknowledge user actions with visual feedback rather than with both audio and visuals.

Design constraint #7: Launch feature set vs roadmap

Returning to Davies’ year with the first-generation Focals by North, he describes how their feature set evolved:

“Focals launched with a relatively compact set of features, hitting what I’d say were the essentials: messages, turn-by-turn directions, weather reports, calendar reminders, and the ability to summon an Uber to your current location. The smart glasses also interacted with Amazon Alexa”.

What followed was a plethora of features, such as widgets for data snacking. However, this output was entirely dependent on what North themselves were able to produce. Apple’s App Store and developer ecosystem will open the floodgates before the device is even out: we can expect guidelines for porting ARKit apps from their smartphone versions to the Glass to be distributed to developers months before the hardware hits the market, most likely from the day of announcement onwards, and perhaps even earlier for selected developers.

From that point onwards, how the Glass evolves through product generations will depend on its market success.

2025: Year of AR

To end with, let’s summarise the key elements that AR glasses’ success - and subsequently augmented reality’s success in penetrating the mainstream for both business and pleasure - will hinge upon:

All-day wearability through comfort and display quality - 1st gen: check

Displays in both eyes for stereoscopic vision - 1st gen: check

Fast and accurate scene understanding via LiDAR - 1st gen: check

Voice input - 1st gen: check

Standalone processing power - future product generations

Audio output - future product generations

Hand tracking and 6 degrees-of-freedom (6DOF) interaction - future product generations

If Apple continues to invest in AR, my prediction is that Apple Glass will reach its maturity and mainstream breakthrough some 3-5 years after the initial launch.

In 2025, the iPhone will be 18 years old. A fitting time for a new generation.

Stay safe and wear a mask,

Aki